HPC FlashForward - Nvidia, Mellanox and Intel

We've been great fans of the FlashForward series, which finished its current series in the UK last night. Trying to guess what the writers have in store for us, when they have already told us, is an interesting challenge.

With the International Supercomputer Conference (ISC) in full swing, we're also starting to see companies bring to market products they've been promising for some time.

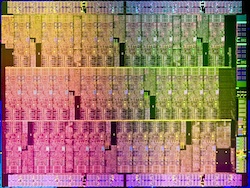

Single Core bad, Many Cores good

The first is Intel's attempt to regain much of the ground lost to GPUs, especially in the absence of the now-cancelled Larrabee programme. It's now known that many of the Larrabee advances have found their way into the new Many Integrated Core (MIC) architecture Intel is promoting.

Using Intel's new 22nm fabrication process, the idea is to scale the first product, known as 'Knights Corner', to 50 processing cores on a single chip. This is a massive increase over Intel's current dual- and quad-core chips, and exceeds AMD's current six-core Phenom offering. It is, however, important to note, though not widely reported, that these are not standard x86 processors. You wouldn't be running Windows on them. They are custom chips, but will have a C++ x86-type SDK, which should make translation between existing x86 code and the new MIC code faster than normal. Intel is hoping that this familiar tooling will drive adoption within the HPC industry. We'll be particularly interested to see how easy it is to run the same data processing code (x86 vs x86-MIC) on the same system. There may be the opportunity to choose which is the best location (x86 vs MIC) to run a particular job, without having to worry too much about code conversion or optimisation.

GPU without CPU

The second piece of news is around a combination of the Nvidia GPUDirect technology and the Mellanox ConnectX-2 InfiniBand Adapter. One of the major problems we always face is connecting disparate systems together. We can have multiple GPUs in a single unit (7 in one case), providing fast access via the system's PCI Express bus. However, once you get more than one system, you have the problem of message passing and control across units. On the cheap side, we've always gone with multiple Gigabit network ports per unit, connected to a low-latency switch, but we've also had customers with InfiniBand switched fabric environments.

However, both of these interconnect techniques operate at the system level, not the individual device level (such as the GPU). The solution for multiple systems with multiple GPUs still required the system CPU to be responsible for the initiation and management of memory transfers between the GPU and the InfiniBand network. The requirement for the system CPU to be involved in this communication (together with the need to copy the buffer memory) creates bottlenecks in the performance of the whole system.
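To make the cost of that extra buffer copy concrete, here is a rough back-of-the-envelope sketch in Python. It treats a GPU-to-network send as a chain of store-and-forward hops and compares a path that includes a CPU-driven host copy with one that skips it. The bandwidth figures, the message size and the function name are all hypothetical placeholders chosen for illustration; they are not measurements or vendor numbers, and real transfers overlap and pipeline in ways this toy model ignores.

```python
from typing import Iterable


def transfer_time_s(size_bytes: float, hop_bandwidths: Iterable[float]) -> float:
    """Total time for a transfer that crosses each hop in turn.

    Each hop is modelled as size / bandwidth, with no overlap between hops.
    """
    return sum(size_bytes / bandwidth for bandwidth in hop_bandwidths)


# Hypothetical bandwidths in bytes per second (placeholders, not measurements).
PCIE_BW = 6e9       # GPU memory -> host buffer over PCI Express
HOST_COPY_BW = 5e9  # CPU copies GPU driver buffer -> network adapter buffer
IB_BW = 3e9         # host buffer -> InfiniBand fabric

MESSAGE_BYTES = 64 * 1024 * 1024  # hypothetical 64 MiB message

# Path with the intermediate host copy managed by the system CPU.
staged = transfer_time_s(MESSAGE_BYTES, [PCIE_BW, HOST_COPY_BW, IB_BW])

# Path assuming the intermediate host copy is no longer needed.
shared = transfer_time_s(MESSAGE_BYTES, [PCIE_BW, IB_BW])

saving = 1 - shared / staged

if __name__ == "__main__":
    print(f"staged path: {staged * 1e3:.2f} ms")
    print(f"shared path: {shared * 1e3:.2f} ms")
    print(f"relative saving: {saving:.1%}")
```

The point of the sketch is only that every additional hop adds its own size-over-bandwidth term, so removing one shortens every transfer regardless of message size.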

Nvidia's GPUDirect, when partnered with Mellanox's ConnectX-2 technology, removes the need for the system CPU to take part in this communication. They are currently claiming an immediate 30% decrease in GPU-to-GPU communication costs between systems. Overall performance, especially in systems that use parallel execution, is claimed to increase by 42%, and this appears to be without any software optimisation. It's purely hardware.

These are just two of the types of technological developments that make us excited to be in this industry. As always, we'd welcome your insightful comments on these areas.
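How much of a communication saving shows up as end-to-end speedup depends on how much of a job's runtime is spent communicating in the first place. A minimal Amdahl-style sketch of that relationship follows. The function name and the example fractions are our own illustrative assumptions, not figures from Nvidia or Mellanox.

```python
def overall_speedup(comm_fraction: float, comm_reduction: float) -> float:
    """Amdahl-style estimate of end-to-end speedup.

    comm_fraction:  share of the original runtime spent on communication (0..1).
    comm_reduction: fractional cut in communication time (0.30 means 30% less).
    """
    if not 0.0 <= comm_fraction <= 1.0:
        raise ValueError("comm_fraction must be between 0 and 1")
    if not 0.0 <= comm_reduction < 1.0:
        raise ValueError("comm_reduction must be at least 0 and below 1")
    # Compute time is unchanged; only the communication share shrinks.
    new_runtime = (1.0 - comm_fraction) + comm_fraction * (1.0 - comm_reduction)
    return 1.0 / new_runtime


if __name__ == "__main__":
    # Hypothetical communication shares, each with a 30% cut in communication time.
    for fraction in (0.1, 0.25, 0.5, 0.75):
        print(f"{fraction:.0%} communication -> {overall_speedup(fraction, 0.30):.2f}x")
```

Under this simple model, a job that spends half its time communicating would run roughly 1.18 times faster after a 30% cut in communication time, while a compute-bound job would barely notice the change.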

---

Feedback and comments are welcome to steven.algieri@scalabiliti.com